Learning is a process of changing an individual's behavior through interaction with the environment. Emotions play a very important role in this process, influencing how quickly learning proceeds. A person's emotions can be identified visually by observing their facial expression. Facial expression recognition has become an active research topic in human-computer interaction, along with the development of computer vision and deep learning techniques. This research explores the MobileNet deep learning architecture for classifying facial emotions. We successfully classified five emotion classes. The highest accuracy, 88.492%, was obtained at a learning rate of 0.0001.

We also introduce a novel dataset called USK-FEMO, collected from junior high school students at SMP Negeri 1 Darul Imarah, Aceh Besar Regency, Indonesia. It includes five classes: happiness, sadness, anger, surprise, and boredom.

Our goal is to address one of the main challenges in this area: the need for datasets containing adequate data, especially for students. The image data was collected manually with a digital camera, yielding 2,250 images, with each class containing 250 emotion images. This dataset can be used for two tasks, classification and detection, to recognize each of the five emotion classes.

Emotions play a crucial role in the learning process, influencing how the learning process is ultimately carried out. Speeding up learning therefore requires a system that classifies students' facial expressions to detect their psychological condition, which helps teachers streamline the learning process. Facial expression recognition has become an active research topic in human-computer interaction, along with the development of computer vision and deep learning techniques. One of the major challenges is the need for datasets containing adequate data, especially for students.

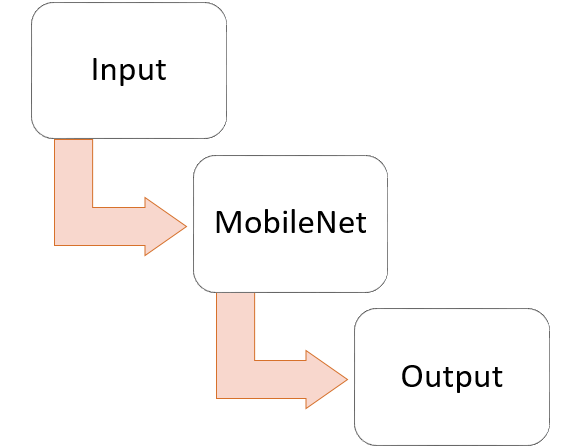

To classify facial emotions, we use a deep learning approach, the convolutional neural network (CNN), with the MobileNet architecture. CNNs are commonly used in image processing and computer vision to recognize objects in images, classify image categories, and solve machine vision problems.

The model was built using the Convolutional Neural Network (CNN) method, with MobileNet as the CNN architecture. MobileNet is designed for efficiency and exposes two hyperparameters for building very small, low-latency models that can be easily deployed in mobile and embedded applications. It splits each convolution into a depthwise convolution followed by a pointwise convolution, which reduces computation.
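The saving from the depthwise/pointwise split can be illustrated with a rough multiply-add count. This is a minimal sketch: the function names and the example layer sizes (3 × 3 kernel, 64 input channels, 128 output channels, 56 × 56 feature map) are illustrative assumptions, not values taken from this project.

```python
def standard_conv_cost(kernel, in_ch, out_ch, fmap):
    """Multiply-adds for a standard kernel x kernel convolution
    producing an fmap x fmap output (stride 1, 'same' padding assumed)."""
    return kernel * kernel * in_ch * out_ch * fmap * fmap


def separable_conv_cost(kernel, in_ch, out_ch, fmap):
    """Multiply-adds for a depthwise convolution followed by a
    pointwise (1 x 1) convolution with the same input/output sizes."""
    depthwise = kernel * kernel * in_ch * fmap * fmap  # one filter per input channel
    pointwise = in_ch * out_ch * fmap * fmap           # 1 x 1 conv mixes the channels
    return depthwise + pointwise


def cost_ratio(kernel, in_ch, out_ch, fmap):
    """Separable cost divided by standard cost; equals 1/out_ch + 1/kernel**2."""
    return (separable_conv_cost(kernel, in_ch, out_ch, fmap)
            / standard_conv_cost(kernel, in_ch, out_ch, fmap))


if __name__ == "__main__":
    # Hypothetical layer sizes chosen only for illustration.
    print(f"separable / standard = {cost_ratio(3, 64, 128, 56):.4f}")
```

For a 3 × 3 kernel the ratio is dominated by the 1/9 term, so the split costs roughly eight to nine times fewer multiply-adds than a standard convolution with the same shapes.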

To accelerate training, some layers of the neural network are frozen. The first five layers are frozen, while the remaining layers stay trainable (unfrozen). A dropout layer with a rate of 0.2 is added to improve generalization and reduce overfitting. As a result, the performance of the model is significantly improved. The final fully connected layer is also replaced so that the model outputs five classes.
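The fine-tuning setup described above might look roughly like the following sketch. It assumes TensorFlow/Keras, which this page does not name. The helper `build_model`, the Adam optimizer, the categorical cross-entropy loss, and global average pooling are assumptions made for illustration. The 244 × 244 input size comes from the dataset table, and the 0.0001 learning rate is the value reported for the best result.

```python
import tensorflow as tf


def build_model(num_classes=5, input_shape=(244, 244, 3), weights=None,
                n_frozen=5, dropout_rate=0.2, learning_rate=1e-4):
    """Hypothetical helper: MobileNet backbone with a new 5-class head."""
    # Backbone without the original 1000-class classifier.
    # weights=None avoids a download; pass weights="imagenet" for pretrained weights.
    base = tf.keras.applications.MobileNet(
        input_shape=input_shape, include_top=False,
        weights=weights, pooling="avg")

    # Freeze the first n_frozen layers (as Keras counts them, including
    # the input layer); all later layers stay trainable.
    for layer in base.layers[:n_frozen]:
        layer.trainable = False

    # Dropout for regularization, then a new fully connected output layer.
    x = tf.keras.layers.Dropout(dropout_rate)(base.output)
    outputs = tf.keras.layers.Dense(num_classes, activation="softmax")(x)

    model = tf.keras.Model(inputs=base.input, outputs=outputs)
    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=learning_rate),  # assumed optimizer
        loss="categorical_crossentropy",
        metrics=["accuracy"])
    return model
```

Calling `build_model()` returns a compiled model whose output has one probability per emotion class. Which layers count as "the first five" depends on the framework, so the slice above is one possible reading.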

USK-FEMO is a public dataset that is open to everyone. For detailed information about the dataset, please see the technical report linked below.

| Categories | Total | Dimensions |
|---|---|---|
| Happy | 255 | 244 x 244 pixels |
| Sad | 255 | 244 x 244 pixels |
| Anger | 255 | 244 x 244 pixels |
| Surprised | 255 | 244 x 244 pixels |
| Bored | 255 | 244 x 244 pixels |

You can download the dataset and code using the link below.

You can contact Muhajir via e-mail with questions about the dataset and paper, and visit our lab website: https://comvis.mystrikingly.com

The following publications use our dataset. Please contact us if you are using our dataset, and we will add your paper to the list.